
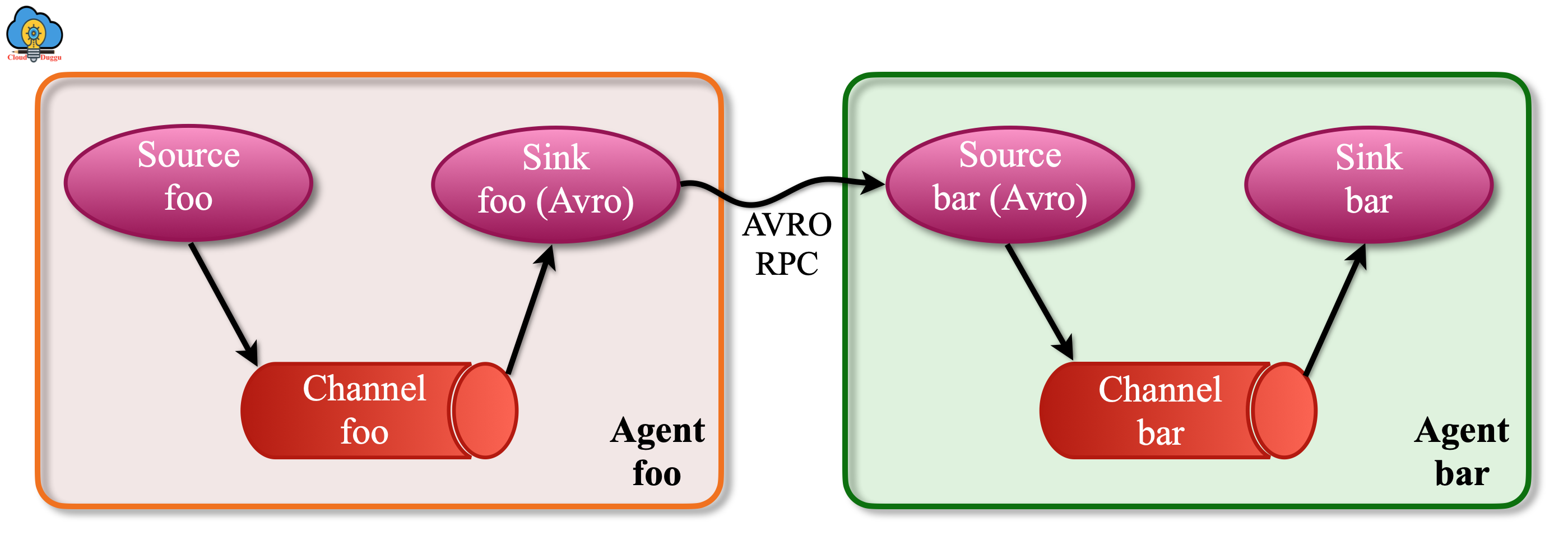

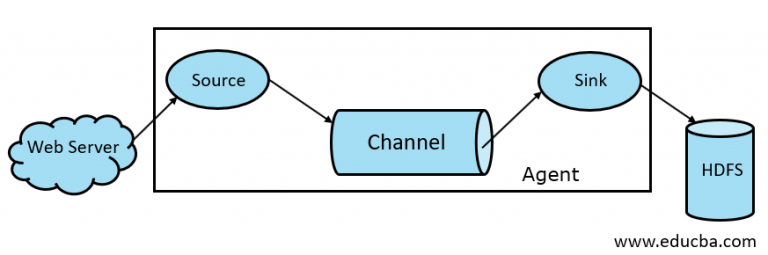

Providing this information is straightforward Flume’s source component picks up the log files from the source or data generators and sends it to the agent where the data is channeled.

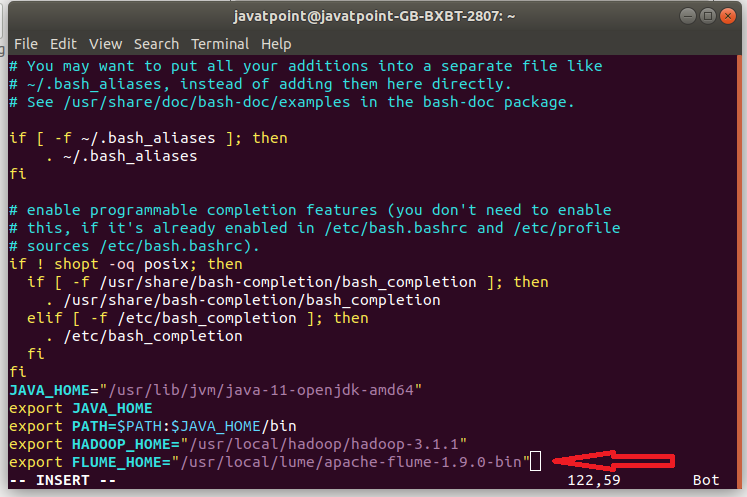

To stream data from web servers to HDFS, the Flume configuration file must have information about where the data is being picked up from and where it is being pushed to. The process of streaming data through Apache Flume needs to be planned and architected to ensure data is transferred in an efficient manner. Streaming Data with Apache Flume: Architecture and Examples Flume can connect to various plugins to ensure that log data is pushed to the right destination. There, the log files can be consumed by analytical tools like Spark or Kafka. Flume’s Features and CapabilitiesĪpache Flume pulling logs from multiple sources and shipping them to Hadoop, Apache Hbase and Apache Sparkįlume transfers raw log files by pulling them from multiple sources and streaming them to the Hadoop file system. They can size up to terabytes or even petabytes, and significant development effort and infrastructure costs can be expended in an effort to analyze them.įlume is a popular choice when it comes to building data pipelines for log data files because of its simplicity, flexibility, and features-which are described below. These log files will contain information about events and activities that are required for both auditing and analytical purposes. Organizations running multiple web services across multiple servers and hosts will generate multitudes of log files on a daily basis. Facebook, Yahoo, and LinkedIn are few of the companies that rely upon Hadoop for their data management. A number of databases use Hadoop to quickly process large volumes of data in a scalable manner by leveraging the computing power of multiple systems within a network. HDFS is a tool developed by Apache for storing and processing large volumes of unstructured data on a distributed platform. HDFS stands for Hadoop Distributed File System. Later, it was equipped to handle event data as well. Initially, Apache Flume was developed to handle only log data.

There, applications can perform further analysis on the data in a distributed environment. The History of Apache FlumeĪpache Flume was developed by Cloudera to provide a way to quickly and reliably stream large volumes of log files generated by web servers into Hadoop. In addition to streaming log data, Flume can also stream event data generated from web sources like Twitter, Facebook, and Kafka Brokers.

It facilitates the streaming of huge volumes of log files from various sources (like web servers) into the Hadoop Distributed File System (HDFS), distributed databases such as HBase on HDFS, or even destinations like Elasticsearch at near-real time speeds. Get Started with ELK and Logz io - Shipping, Analyzing and Visualizing Your LogsĪpache Flume is an efficient, distributed, reliable, and fault-tolerant data-ingestion tool.Challenge Met: Adopting Intelligent Observability Pipelines.
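As a rough illustration of a configuration file that states where data is picked up from and where it is pushed to, the Python sketch below assembles the text of a minimal single-agent Flume properties file. It assumes a spooling-directory source, a memory channel, and an HDFS sink. The agent name `a1`, the component names `r1`, `c1`, and `k1`, the spool directory, and the HDFS URL are all hypothetical placeholders, not values from this article, and a real deployment would likely need further tuning properties.

```python
def build_flume_config(agent: str, spool_dir: str, hdfs_path: str) -> str:
    """Return the text of a minimal single-agent Flume properties file.

    All names and paths are illustrative assumptions.
    """
    source, channel, sink = "r1", "c1", "k1"  # arbitrary component names

    props = {
        # Declare the components that belong to this agent.
        f"{agent}.sources": source,
        f"{agent}.channels": channel,
        f"{agent}.sinks": sink,
        # Source: where the data is picked up from (a directory of log files).
        f"{agent}.sources.{source}.type": "spooldir",
        f"{agent}.sources.{source}.spoolDir": spool_dir,
        f"{agent}.sources.{source}.channels": channel,
        # Channel: buffers events between the source and the sink.
        f"{agent}.channels.{channel}.type": "memory",
        # Sink: where the data is pushed to (a path in HDFS).
        f"{agent}.sinks.{sink}.type": "hdfs",
        f"{agent}.sinks.{sink}.hdfs.path": hdfs_path,
        f"{agent}.sinks.{sink}.channel": channel,
    }
    return "\n".join(f"{key} = {value}" for key, value in props.items()) + "\n"


# Hypothetical locations, used only to show the shape of the output.
config_text = build_flume_config(
    agent="a1",
    spool_dir="/var/log/webserver/spool",
    hdfs_path="hdfs://namenode:8020/flume/weblogs",
)
print(config_text)
```

Saved to a file such as `example.conf`, a configuration like this would typically be started with `flume-ng agent --conf conf --conf-file example.conf --name a1`, where the `--name` value must match the agent name used in the property keys. Note that a source lists its `channels` (plural), while a sink is bound to exactly one `channel`.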
